Job description

Lead Data Platform Engineer (5873)

We’re a national law firm with a local reach. Our legal experts are here for you. Whether it’s personal or business, we understand that everyone’s situation is different. But we’re more than just a law firm – we’re a team of people working together to help individuals and businesses navigate life’s ups and downs. Working here, you’ll feel part of our friendly and inclusive environment.

We’ll value you for who you are and what you bring. We support each other and push boundaries to achieve incredible things and make a real difference to our clients and communities.

We're always looking to support our colleagues to work in a way that works best for them and everyone else, including our clients, the business and the regulators. Please speak to a member of our Recruitment and Onboarding team for more information.

Role Overview

We are seeking a highly skilled Lead Data Platform Engineer to join our Data Engineering and Machine Learning team. This role is pivotal in designing, architecting, and delivering robust, scalable, and secure data platforms that enable the firm to manage, analyse, and leverage data effectively while meeting regulatory and client confidentiality requirements.

You will combine hands-on engineering with strong solution architecture skills, ensuring that data platform solutions are fit-for-purpose, well-governed, and aligned to business needs. A key focus will be on Databricks, Azure Data Factory, and the Lakehouse Medallion architecture, with DevOps and automation at the heart of everything you do.

Key Responsibilities

- Develop and deploy the Data Lakehouse platform in an Azure Virtual Network environment.

- Develop and maintain automations to manage cluster and self-hosted integration runtime virtual machines and their related networking.

- Architect and design end-to-end data platform solutions, ensuring scalability, reliability, and compliance.

- Lead implementation using Databricks, PySpark, Spark SQL, and Azure Data Factory.

- Develop APIs for data integration and automation.

- Write efficient, maintainable code in PySpark, Python, and SQL.

- Implement and manage CI/CD pipelines and automated deployments via Azure DevOps.

- Build infrastructure-as-code solutions (Terraform, ARM templates) for cloud resource provisioning.

- Monitor and optimise platform performance and manage cloud costs.

- Ensure data quality, security, governance, and lineage across all components.

- Collaborate with data engineers, architects, and business stakeholders to translate requirements into effective solutions.

- Maintain comprehensive documentation and stay current with emerging technologies.

- Provide coaching and mentoring to engineers, fostering a culture of continuous learning and technical excellence.

Essential Skills

- Advanced expertise in DevOps practices: CI/CD, automated testing, code quality, and release management for Azure infrastructure and Data Lakehouse assets (ADF code, Databricks Asset Bundles, and Databricks Terraform).

- Experience with infrastructure-as-code tools (Terraform, ARM templates) for cloud resource management.

- Familiarity with monitoring, logging, and automated alerting solutions (e.g., Azure Monitor).

Desirable Skills

- Experience with additional Azure data services (e.g., Microsoft Fabric, Azure Data Factory, Azure Databricks, Azure Databricks Platform Administration, Azure Virtual Networks, Azure ExpressRoute, Network Security Groups, Routing, Azure Virtual Machines, Azure Functions, Azure Logic Apps).

If you’re interested in this role but don’t feel that you meet every requirement, we still encourage you to apply, as your skills and experience may be a great fit.

- 25 days’ holiday as standard plus bank holidays. You can ‘buy’ up to 35 hours of extra holiday too.

- Generous and flexible pension schemes.

- Volunteering days – two fully paid days of volunteering every year for a cause of your choice.

- Westfield Health membership, offering refunds on medical services alongside our Aviva Digital GP services.

We also offer a wide range of well-being initiatives to encourage positive mental health both in and out of the workplace and to make sure you’re fully supported. This includes our Flexible by Choice programme which gives our colleagues more choice over a hybrid way of working subject to role, team and client requirements.

We have been ranked in the Best Workplaces for Wellbeing for Large Organisations for 2024!

Our responsible business programmes are fundamental to who we are and our purpose. We’re committed to being a diverse and inclusive workplace where our colleagues can flourish, and we have established a number of inclusion network groups across our business to support this aim.

Our commitment to Social Responsibility, community investment activity and tackling climate change is a fundamental part of who we are. It’s made up of four strands: Our People, Our Community, Our Environment and Our Pro Bono.

As part of the Irwin Mitchell Group’s onboarding process, all successful applicants are required to complete the group’s employment screening process. This process helps to ensure that all new employees meet our standards in relation to honesty and integrity, thereby protecting the interests of the Group, colleagues, clients, partners and other stakeholders.

We carry out pre-employment screening with our trusted third parties, covering your eligibility to work in the UK, criminal record checks and financial checks.

The employment screening process will fully comply with Data Protection and other applicable laws.

Irwin Mitchell LLP is an equal opportunity employer.

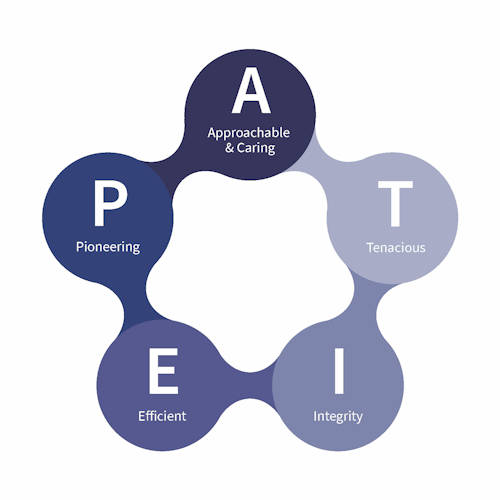

We're proud of our values, and we're looking for people who share them

- Sub-Department: Data Engineering & Platforms

- Sub-Division: Data Architecture & Engineering

- Company: IM LLP

- Working Hours: Full Time

- Vacancy Type: Permanent

- :Flexible

- Salary: Competitive